Article "Efficient Request Queueing – Optimizing LLM Performance"

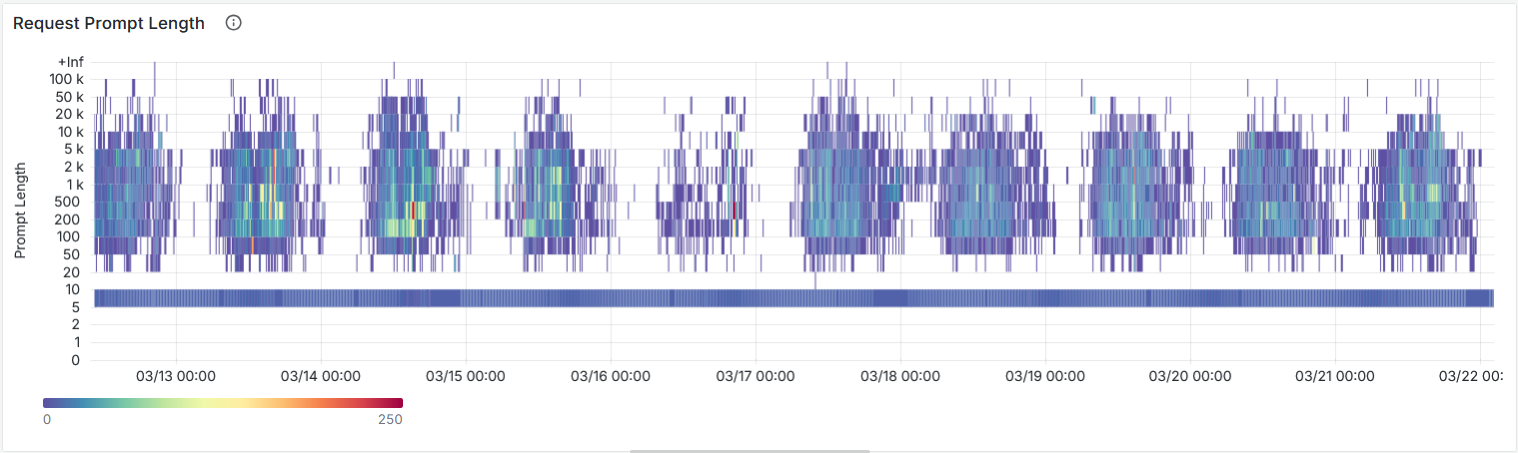

Serving Large Language Models (LLMs) to multiple applications and users in parallel is challenging because their requests compete for limited GPU resources. In the first of three articles, our colleague Benjamin Merkel explores common challenges in request queueing and discusses fair scheduling as a potential solution, including priority-aware and metric-based scheduling.

Read the full article "Efficient Request Queueing – Optimizing LLM Performance" here.